Living Capsules, 2022-2023

This page is dedicated to a series of exhibitions and workshops that grew out of our art-sociology collaboration, as part of the Biohybrid Bodies project.

We set out to explore the possibilities of coexisting with Living Machines, combining our sensory approaches with creative and sociological imaginations. Our goal was to examine new biohybrid technologies and how they transform the distinction between living and non-living entities. Our main question is how we perceive and navigate these blurred boundaries, and it led us to create an immersive artistic experience.

Techniques studied: multisensory experience and performance, data sonification, soundscape, microscopic imaging, voice recording, and AI-generated images.

SOME OF OUR OUTCOMES ARE LISTED BELOW

1. PARTICIPATORY PERFORMANCE: Biohybrid Buzz: an activation through tasting frequencies.

In this piece, participants join an immersive 3D audio experience that combines data sonification and sound spatialization, revealing the connections between biohybrid bodies, human senses, and living machines. During this exploration, participants will have the opportunity to savor a capsule made with the Brazilian flower known as Jambu, Electric Daisy, or Buzz Buttons, which has the peculiar characteristic of inducing vibrations in the mouth. At the same time, they are enveloped in surround sound with low frequencies. Together, the capsule and the sound activate the receptors in the mouth and the skin, merging two senses into a single sensation: vibration.

Future dates and places:

[10th of June 2023 LONDON] (further details & tickets here)

[15th of June 2023 OXFORD] (further details & tickets here)

Sound Lab, Royal College of Art (RCA), UK.

2. WORKSHOP: Data sonification and Living Machines

In this workshop, we will share our recent research in collaboration with sound artist Nikolas Gomes, focusing on the combination of new practices through material and sensory engagements. Participants will explore data sonification techniques and have the opportunity to create and compose sound pieces based on the revealed data.

Future dates and places:

[20th of May 2023 PARIS] (more information here)

[10th of June 2023 LONDON] (further details & tickets here)

[12th of June 2023 OXFORD] (scheduled)

[Sep 2023 BARCELONA] (to be confirmed)

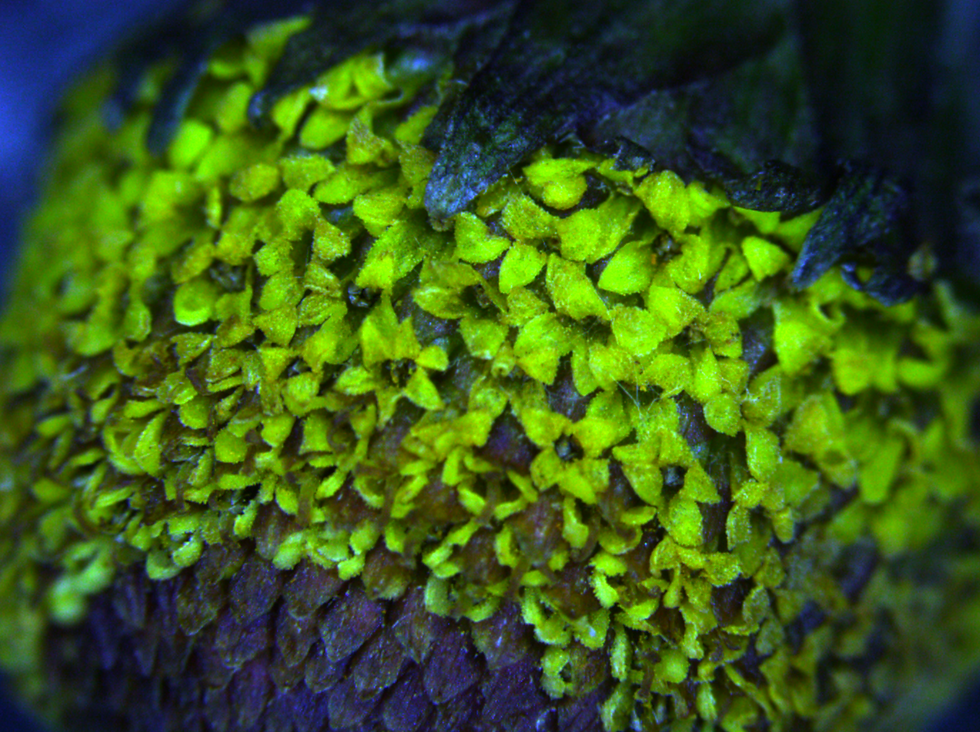

3. MICROSCOPIC IMAGES OF THE JAMBU FLOWER

Collaborator: Dr. Tchern Lenn

(Microscopy and Imaging Technician, Cancer Research UK, UCL)

On November 16, 2022, we were warmly welcomed by Dr. Tchern Lenn, Microscopy and Imaging Technician, in his laboratory. After an initial conversation and a brief explanation of the project, he presented us with two microscopic visualizations of the Jambu flower. It was an intense and contemplative experience to observe closely the morphology, cellular composition, pistils, plant tissues, and other elements present in the natural structure of Jambu. The captured material was later enhanced by adding cyclic movements to the static images.

4. DATA SCIENCE STUDIES

Collaborators: Dr. Xueming Xia and Dr. Han Wu (Department of Chemical Engineering, UCL)

At the end of 2022, we had the privilege of learning about four distinct techniques through the expertise of Dr. Xueming Xia, a specialist in research instrumentation, and Dr. Han Wu, the manager of the Laboratories at the Centre for Nature Inspired Engineering. During these visits, we had the opportunity to explore the inner waves of Jambu, its chemical components, and a vast amount of data that was later translated into sound through the practice of data sonification.

The techniques used were:

UV-Vis, Ultraviolet-visible spectrophotometry - measures how much ultraviolet and visible light the sample absorbs (in this case, blue/cyan light).

FTIR, Fourier-transform infrared spectroscopy - analyzes the infrared absorption spectrum of a sample.

TGA, Thermogravimetric analysis - shows the weight loss of the sample as it is heated.

GC, Gas Chromatography - uses a carrier gas to separate the components of a sample for analysis.

Below, you can listen to what the data tables sound like.

5. DATA SONIFICATION TECHNIQUES

Nikolas Gomes created an application for data sonification based on two questions: a) Is there a way to treat and process a table of data without so much programming and/or repetitive work? and b) Is there a way to transform data into sound in a more playful way that facilitates trial and error? The application needed a graphical interface that makes it usable by people with no background in programming, and it needed to allow ready-made audio files (samples) to be loaded as sound material for sonification. The first version of the software was developed with the main objective of sonifying the chemical and molecular data of the flower and fruit of Jambu, collected in the laboratory by Burdand Barker. This data was stored in tables in .csv format, which can be loaded into SuperCollider using the CSVFileReader class.
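To give a sense of what turning a data table into sound can involve, here is a minimal sketch of one common approach, parameter mapping, in which each value in a column sets the pitch of a short tone. It is not the application described above: it is written in plain Python rather than SuperCollider, it has no graphical interface and does not load samples, and the column names, the values in `EXAMPLE_CSV`, the frequency range, and the output file name are all hypothetical.

```python
import csv
import io
import math
import struct
import wave


def read_column(csv_text, column):
    """Return the numeric values of one named column from CSV text."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [float(row[column]) for row in reader]


def map_to_frequencies(values, low_hz=110.0, high_hz=880.0):
    """Linearly rescale values onto a frequency range (parameter mapping)."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # A flat column has no variation to map, so use a single pitch.
        return [low_hz for _ in values]
    return [low_hz + (v - lo) / (hi - lo) * (high_hz - low_hz) for v in values]


def render_tones(frequencies, note_seconds=0.2, sample_rate=44100):
    """Render one sine tone per frequency as 16-bit mono samples."""
    samples_per_note = int(note_seconds * sample_rate)
    samples = []
    for freq in frequencies:
        for i in range(samples_per_note):
            amplitude = math.sin(2 * math.pi * freq * i / sample_rate)
            samples.append(int(amplitude * 0.5 * 32767))  # half volume
    return samples


def write_wav(path, samples, sample_rate=44100):
    """Write 16-bit mono samples to a WAV file."""
    with wave.open(path, "wb") as wav_file:
        wav_file.setnchannels(1)
        wav_file.setsampwidth(2)
        wav_file.setframerate(sample_rate)
        wav_file.writeframes(struct.pack("<%dh" % len(samples), *samples))


# Hypothetical table, invented for illustration only (not project data).
EXAMPLE_CSV = "wavelength_nm,absorbance\n400,0.10\n450,0.55\n500,1.00\n550,0.40\n"

if __name__ == "__main__":
    values = read_column(EXAMPLE_CSV, "absorbance")
    frequencies = map_to_frequencies(values)
    write_wav("sonification.wav", render_tones(frequencies))
```

Run as a script, this writes a short WAV file with one tone per table row, where higher values sound higher in pitch. Other mappings (volume, duration, or triggering a loaded sample) follow the same pattern of rescaling a column onto a sound parameter.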

6. SPECULATIVE PHOTOGRAPHY

Collaborators: Ram Shergill (photographer)

Daen Palma Huse (model) & Luiza Kessler (model and performer)

We’ve also worked with Ram Shergill - a photographer and critical posthuman bodying researcher. Following conceptual discussions, we brought prototype Living Capsules to a photoshoot where models experimented and posed with them. With a playful tone and experimental mood, we sought to discover and capture intimate/sensory body-capsule moments, penetrate/blur body-capsule boundaries, and create hybrid aesthetics between technology/nature.

7. OTHER PROTOTYPES

We are still prototyping other projects based on the ideas of Living Capsules, and we are preparing a couple of publications that may be shared in the near future.

Support:

Leverhulme Trust, Santander Investigación, and European Social Fund, from 'AGAUR' Agency for Management of University and Research Grants, Department of Business and Knowledge of Catalunya, Spain.

Collaborators:

Antônia Spohr Moro (Producer)

Dr. Carey Jewitt (Research Supervisor, UCL)

Dr. Han Wu (Scientific Consultant, UCL)

Daen Palma Huse (Model)

Dr. Tchern Lenn (Microscopy and Imaging Technician, UCL)

Franccyne Kuser Fegalo (Food Technologist)

Luiza Kessler (Model and Artist)

Louise Carpenedo (Video Recording)

Dr. Marti Ruiz (Research Supervisor)

Nikolas Gomes (Sound Consultant and Composer)

Ramandeep Shergill (Photographer)

Dr. Xueming Xia (Research Instrumentation Technician, UCL)

Currently being developed at Knowledge Lab, UCL, London.